3D animation. I used videos I took of my robot vacuum unknowingly carrying various items on its back as it vacuums. This video is part of my ongoing inquiry into what constitutes work and our relationship with it: the various ways in which we engage with work, often using technologies and domesticated animals as proxies and conduits.

On Opacity

Sitting between video essay and exhibition, this work peruses various ways that people hide themselves and objects for various ends.

Opacity can be unsettling or frightening, whether it is something sinister, like soldiers in military camouflage waiting to ambush someone, or just unsettling, like a mysterious object wrapped in something opaque. On the other hand, it is highly sought after for safety.

To complicate things, it can also be quite (often darkly) humorous when people try to hide, with varying degrees of success, by merging with bushes and the like. Monty Python's How Not to Be Seen (from Flying Circus Season 2, 1970) and Hito Steyerl's HOW NOT TO BE SEEN: A FUCKING DIDACTIC EDUCATIONAL .MOV FILE, 2013 are inspirations.

Some of the questions the work attempts to ask are: who gets to suffer being watched (monitored, targeted) and, conversely, who gets to be seen (in full complexity and safety)? Who gets to decide what is hidden and what isn’t? Playing on the desire to camouflage, I nod toward every being’s desire for, or right to, opacity. The work is inspired by Édouard Glissant’s ontology of relation (‘On Opacity’, Poetics of Relation, 1998), which is where the title comes from, and by other artists who work with ideas around both hiding and revealing identity (Nick Cave’s Soundsuits, for example) as a way to put forth a more complex view of each other.

The video uses free 3D objects found in Blender resources.

Sounds I created using samples:

Granular synthesis of Tales of Hoffman Barcarole (Duet - KATHRYN MEISLE and MARIE TIFFANY) from the Internet Archive

Granular synthesis of hippos found on FreeSound.org

Care, Connection, Control Series

Exploring the relationship between care and exploitation. I used an Instagram emoji of hands to move over a video I took of a milk carton on which a family pets a cow. I then superimposed this video over a 3D object of a milk crate, creating three layers of removal in order to frame this relationship between the animal and its owners/caregivers. Cinema 4D.

Triptych

In progress. A video collage using Paris protest footage from @Decolonizethisplace, cows in a Slovenian village (Velika Planina), and Pussy Riot’s invasion of the World Cup field in 2018, all superimposed onto a 3D scan of a medieval triptych. Originally inspired by the triple window fire in the Paris protest footage.

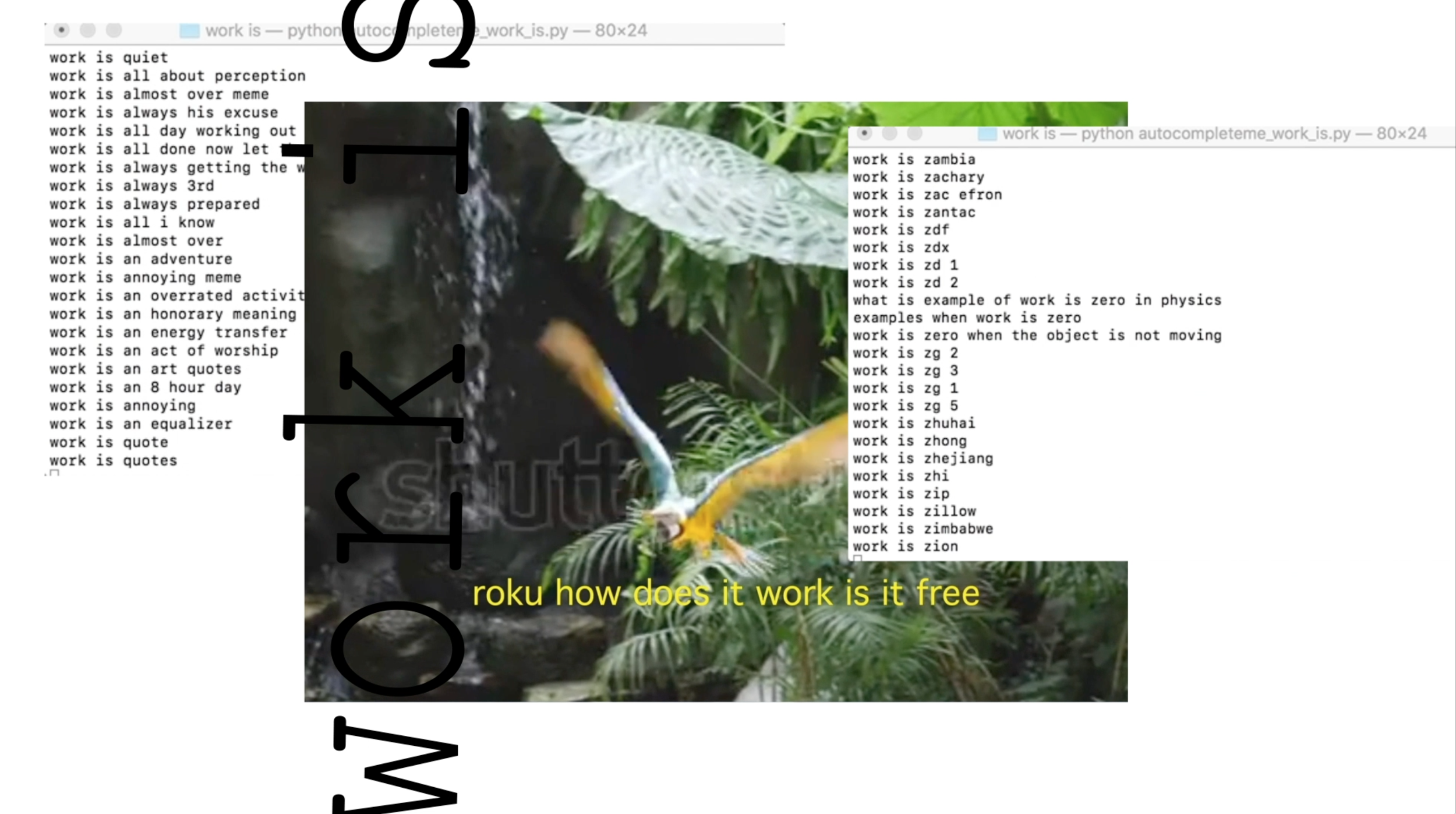

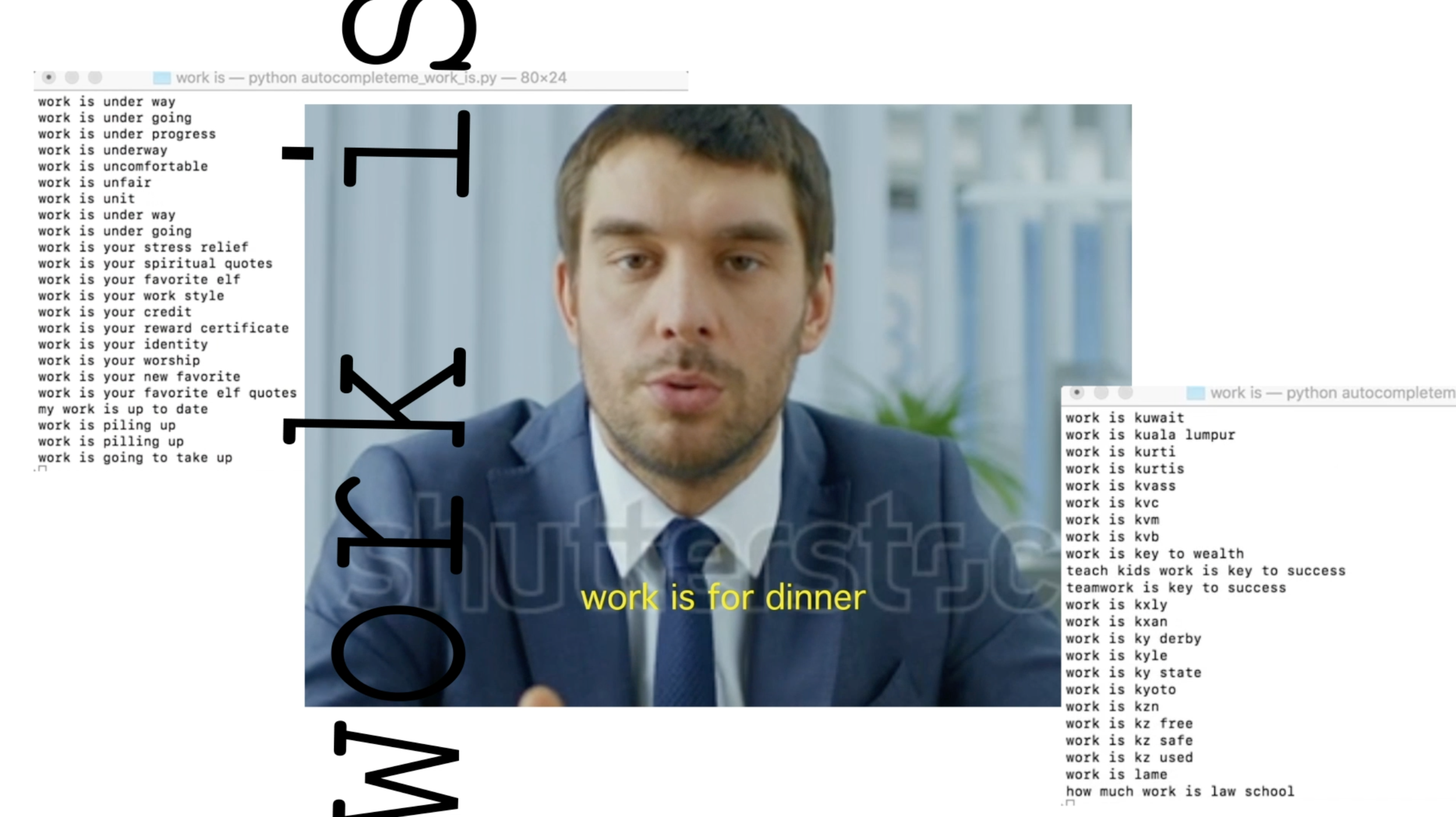

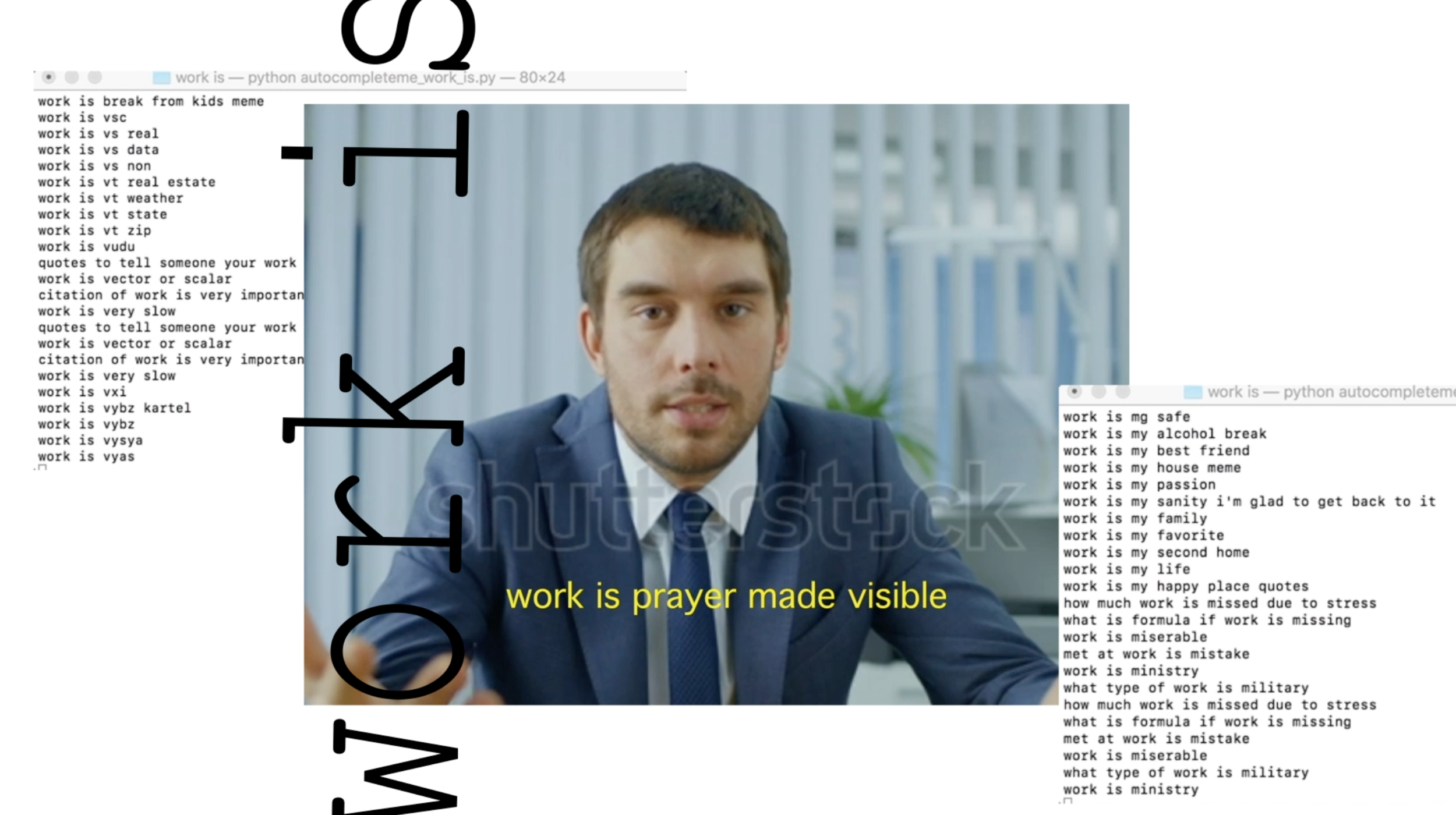

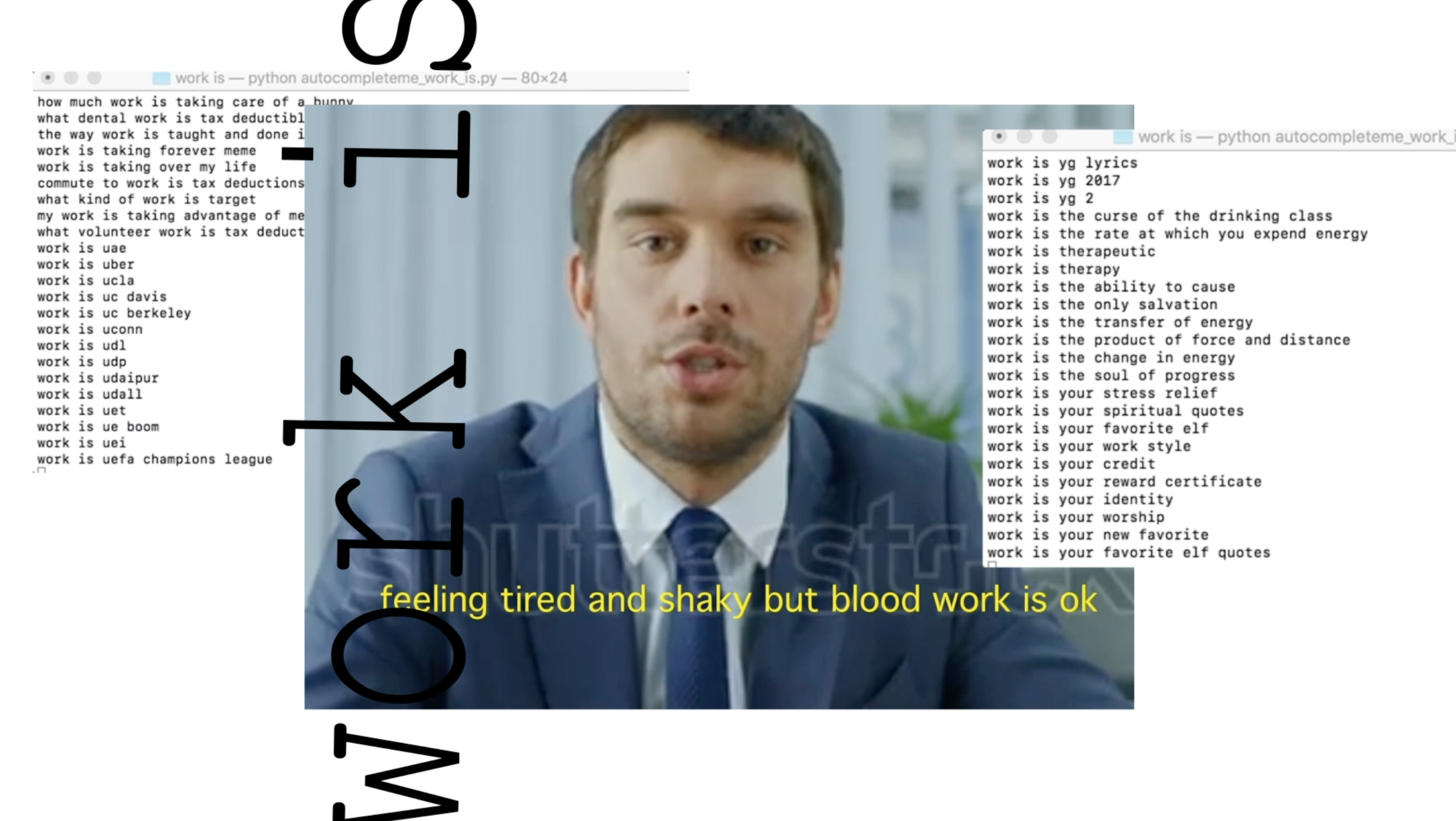

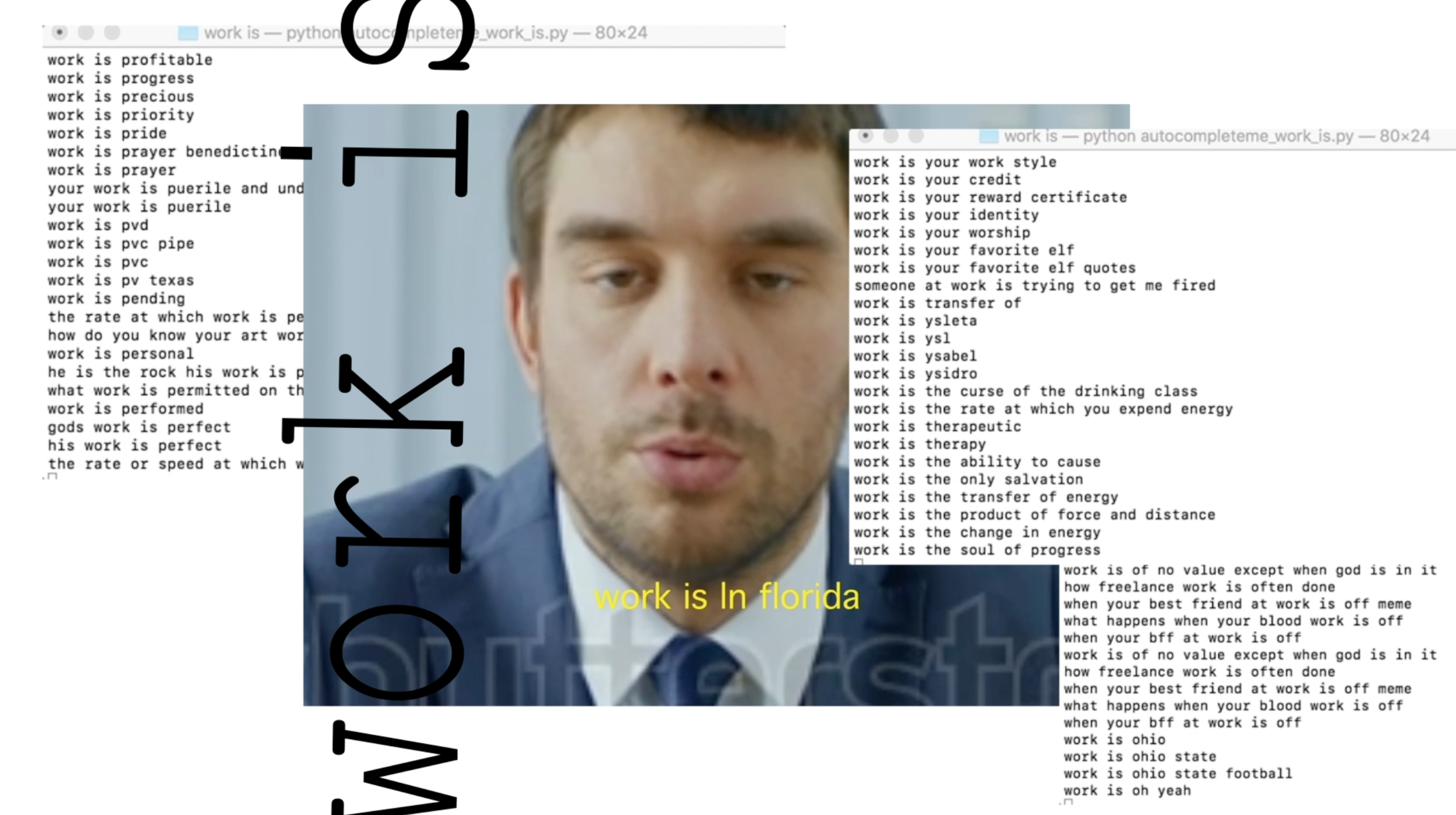

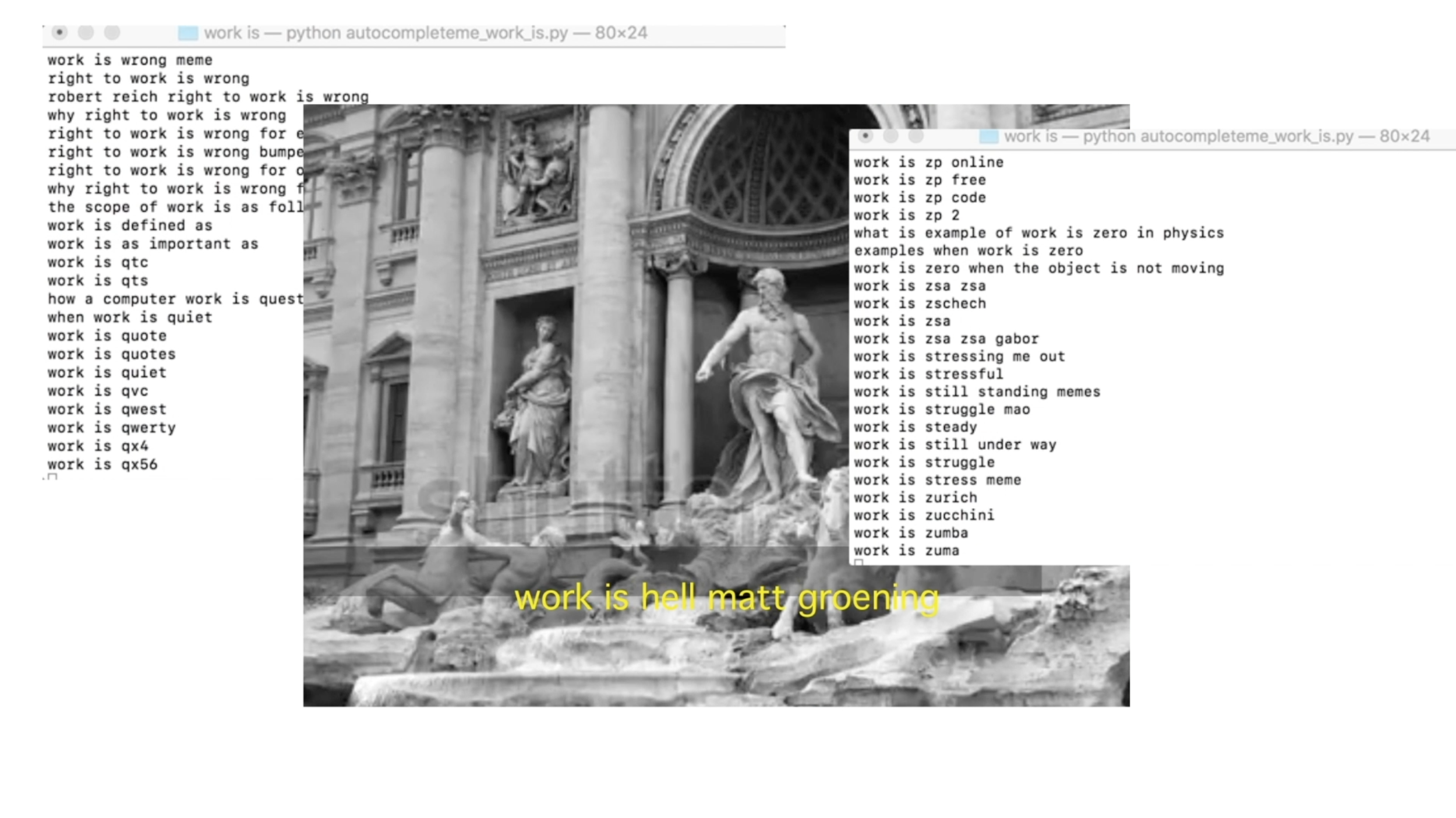

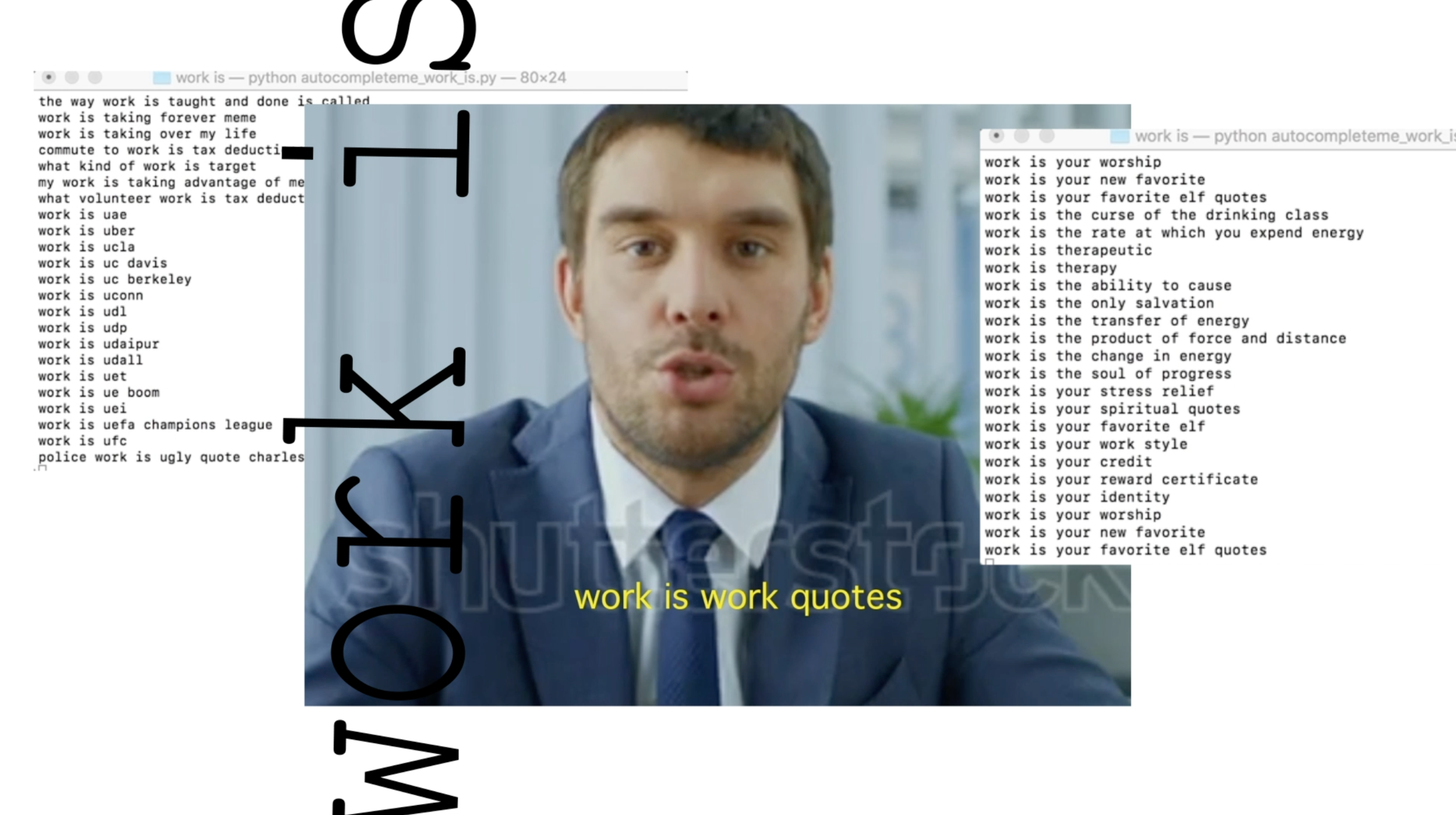

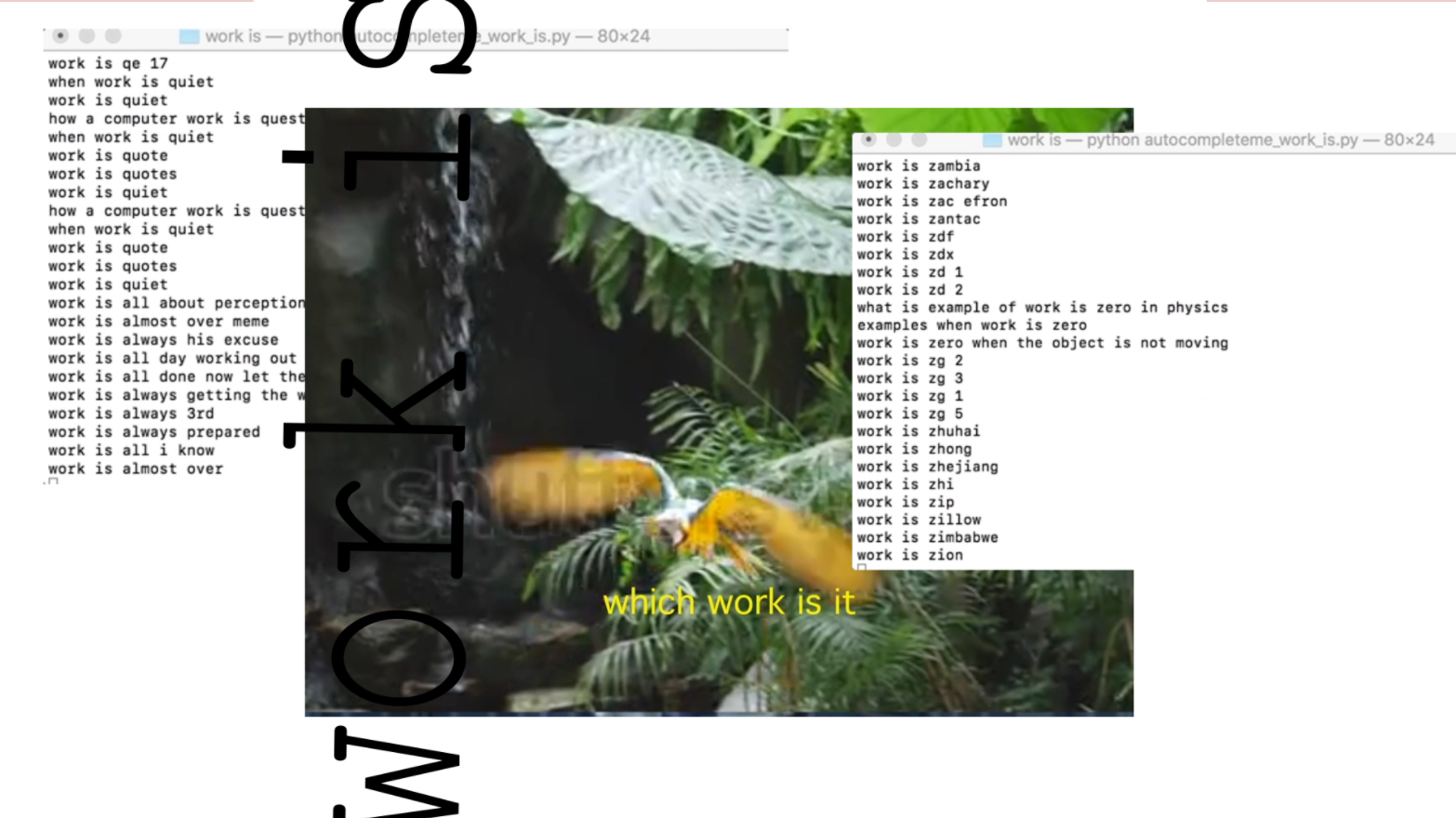

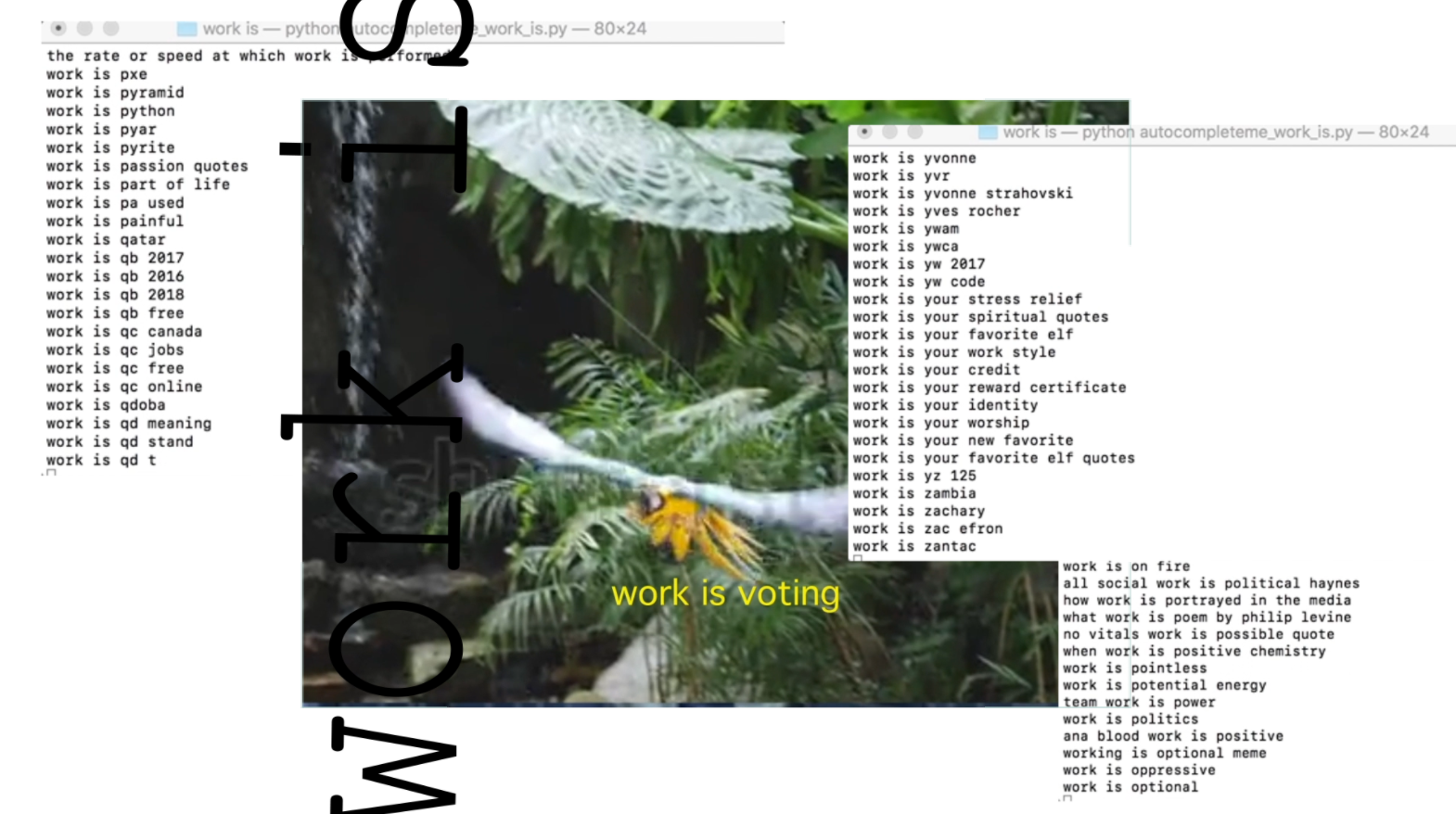

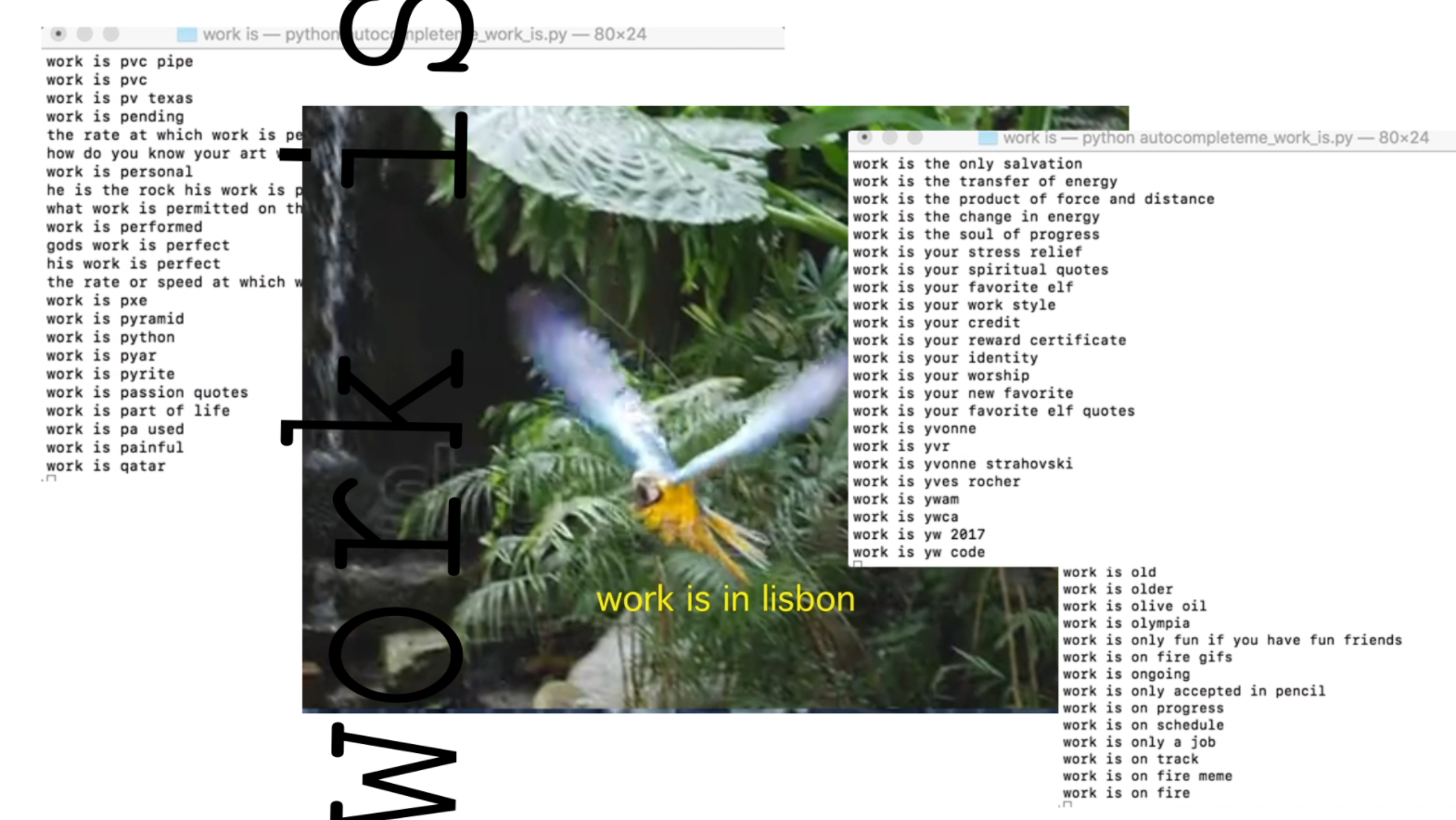

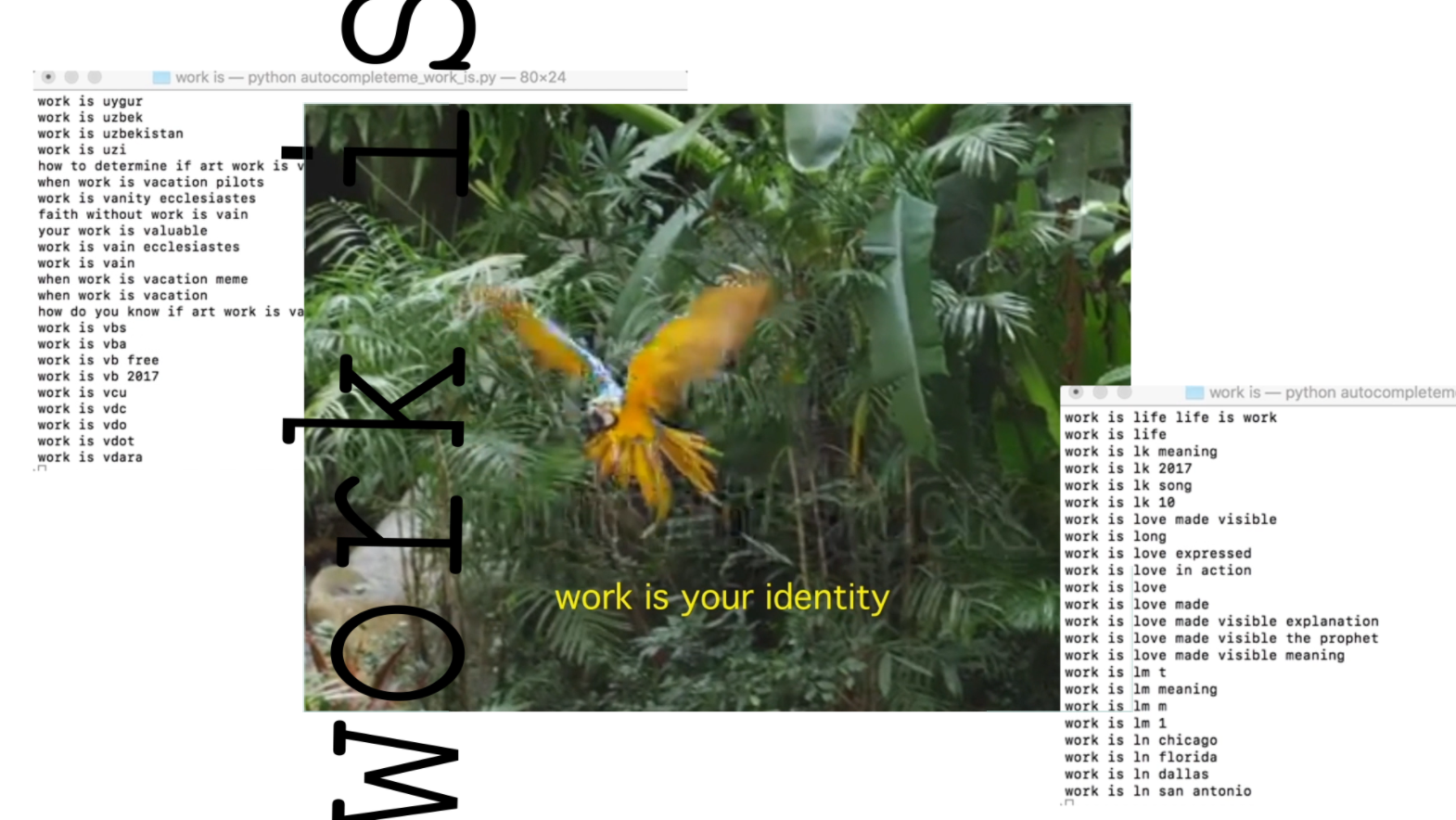

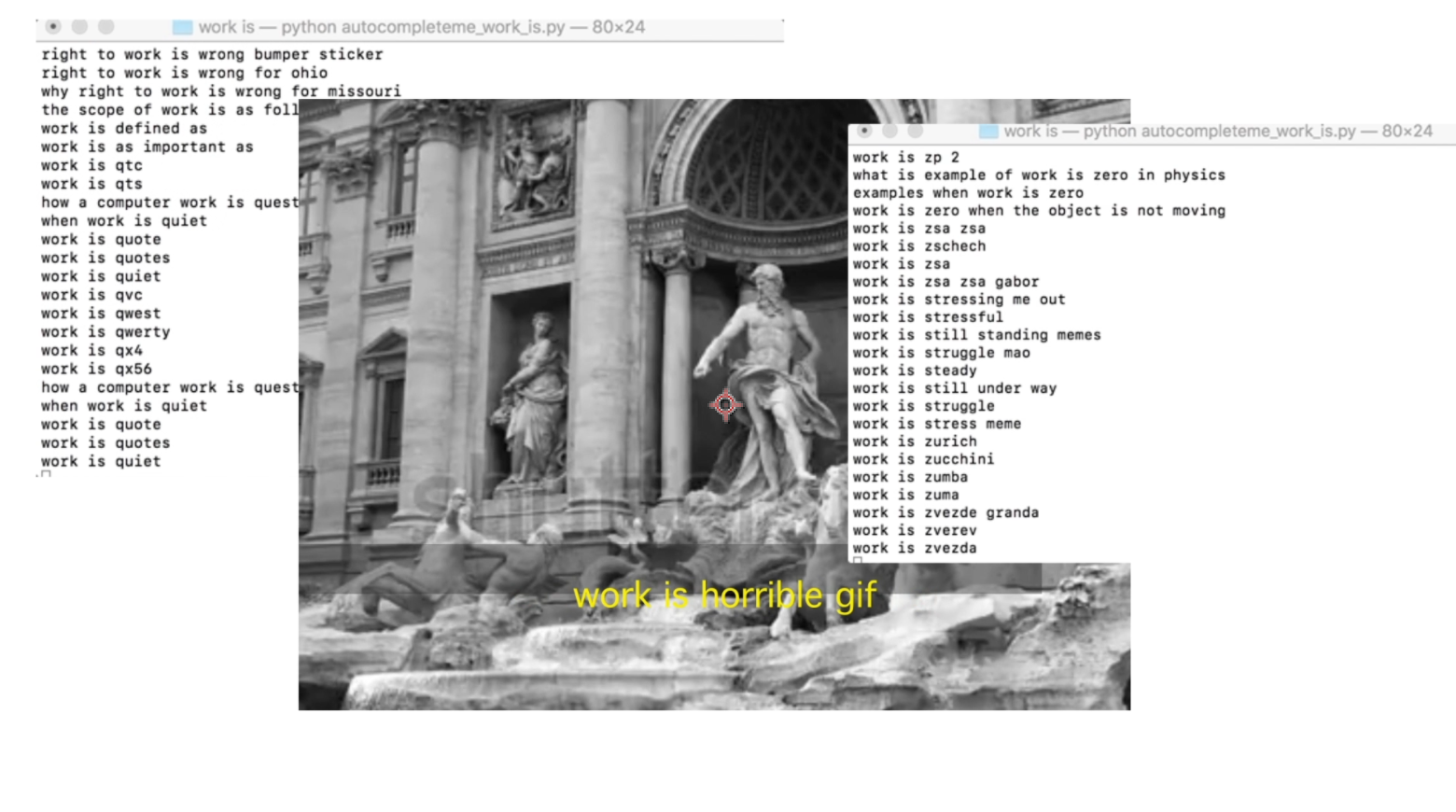

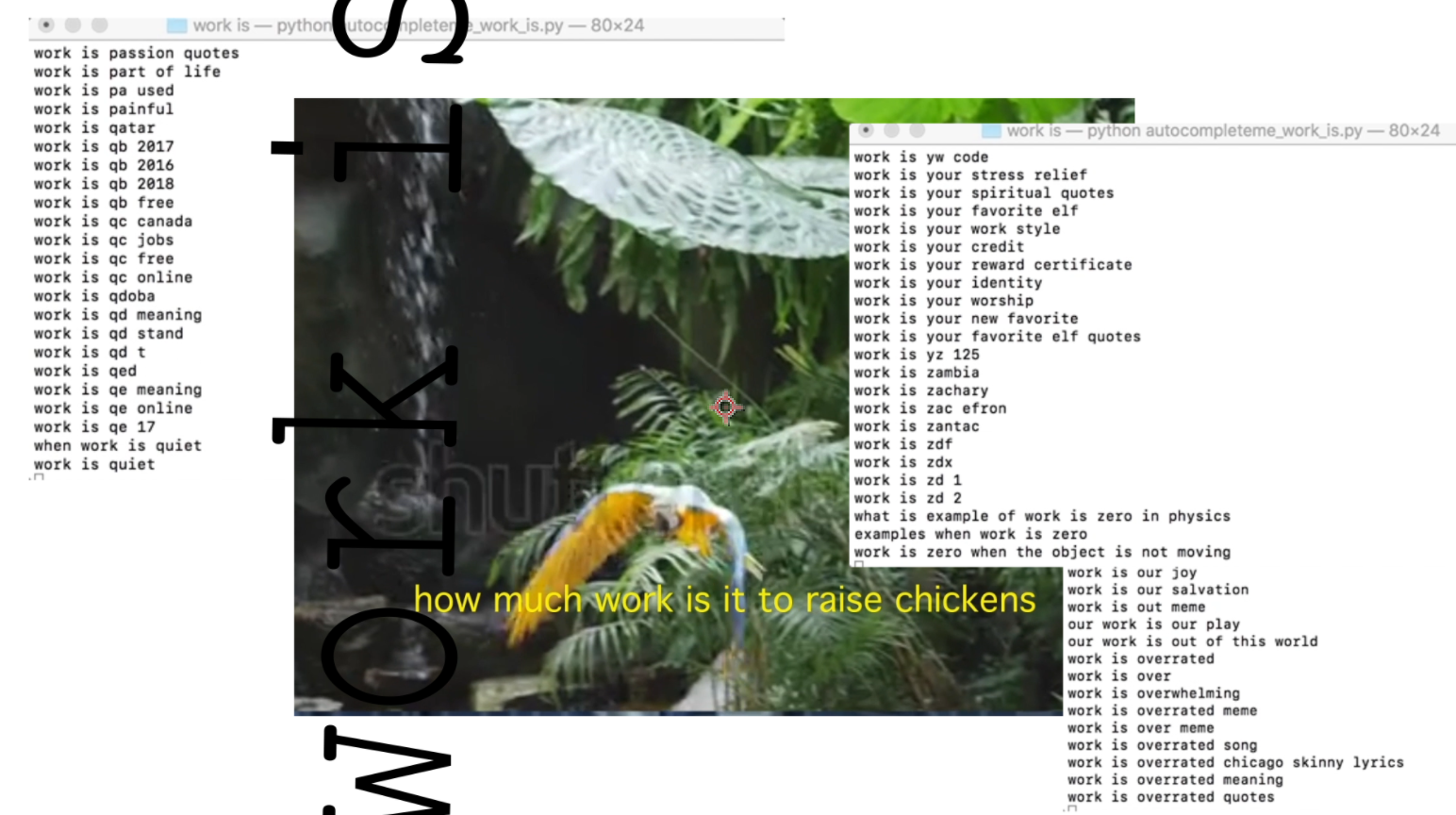

Work Is ____

This project is an inquiry into what work is. I am interested in decoupling work from the wage, to free it from narrow capitalist valuation, and my work is often an attempt to reframe how we (the “Work Society”) see work or, at least, to expand its definition.

I used Python to scrape the results for search terms that begin with the string "work is ____" followed by each letter from a to z, and superimposed the resulting phrases on top of a video collage made from watermarked Shutterstock footage. The watermark reminds the viewer that, just like the phrases, it is content scraped and assembled from the internet.
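The a-to-z querying described above can be sketched roughly as follows. This is only an illustration, not the code used in the piece: where the results were scraped from is not stated here, so the fetching step is left as a hypothetical callable (`fetch_suggestions`), and the sample phrases in `fake_fetch` are invented placeholders, not real scraped results.

```python
import string


def build_queries(stem="work is"):
    """Return the 26 search strings: 'work is a', 'work is b', ... 'work is z'."""
    return [f"{stem} {letter}" for letter in string.ascii_lowercase]


def collect_phrases(fetch_suggestions, stem="work is"):
    """Gather unique phrases for every query, keeping first-seen order.

    fetch_suggestions is a hypothetical callable (query -> list of strings);
    the real scraping step would go there.
    """
    phrases = []
    seen = set()
    for query in build_queries(stem):
        for phrase in fetch_suggestions(query):
            cleaned = phrase.strip().lower()
            # Keep only phrases that really start with the stem, without repeats.
            if cleaned.startswith(stem) and cleaned not in seen:
                seen.add(cleaned)
                phrases.append(cleaned)
    return phrases


def fake_fetch(query):
    """Stand-in for the scraper. These phrases are invented for illustration."""
    canned = {
        "work is a": ["work is a blessing", "Work is a four letter word"],
        "work is b": ["work is boring", "work is a blessing"],
    }
    return canned.get(query, [])


for line in collect_phrases(fake_fetch):
    print(line)
```

The resulting list is what would then be laid over the video, one phrase at a time.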

The footage slows down progressively throughout the seven minutes, as if to make room for reflection or to invite the viewer to slow down along with it.

Sound design: I made a granular synthesis of cello and a man singing from freesound.org (credits to follow). I also added brown noise.

This particular project was inspired by Sam Lavigne’s Why Do We Work So Hard and Ellen Nichols’ The Answer Is. It was Ellen’s code that I reworked for this project.

My other Python projects use VidPy, Sam Lavigne’s library. You can find them and my code here (Joan of Arc and a Species Goodbye) and here (Bold & Shy).

Worlds

I used Cinema 4D to create an abstracted world of opacity, new identities, and other posthuman possibilities. This is part of a world-making project that speculates futures in figurative (and sometimes prefigurative) ways.

Inspired by Maurizio Cattelan's gilded toilet at the Guggenheim Museum in New York. Cinema 4D.

Another part of the world-making video project.

Please listen using the links below.

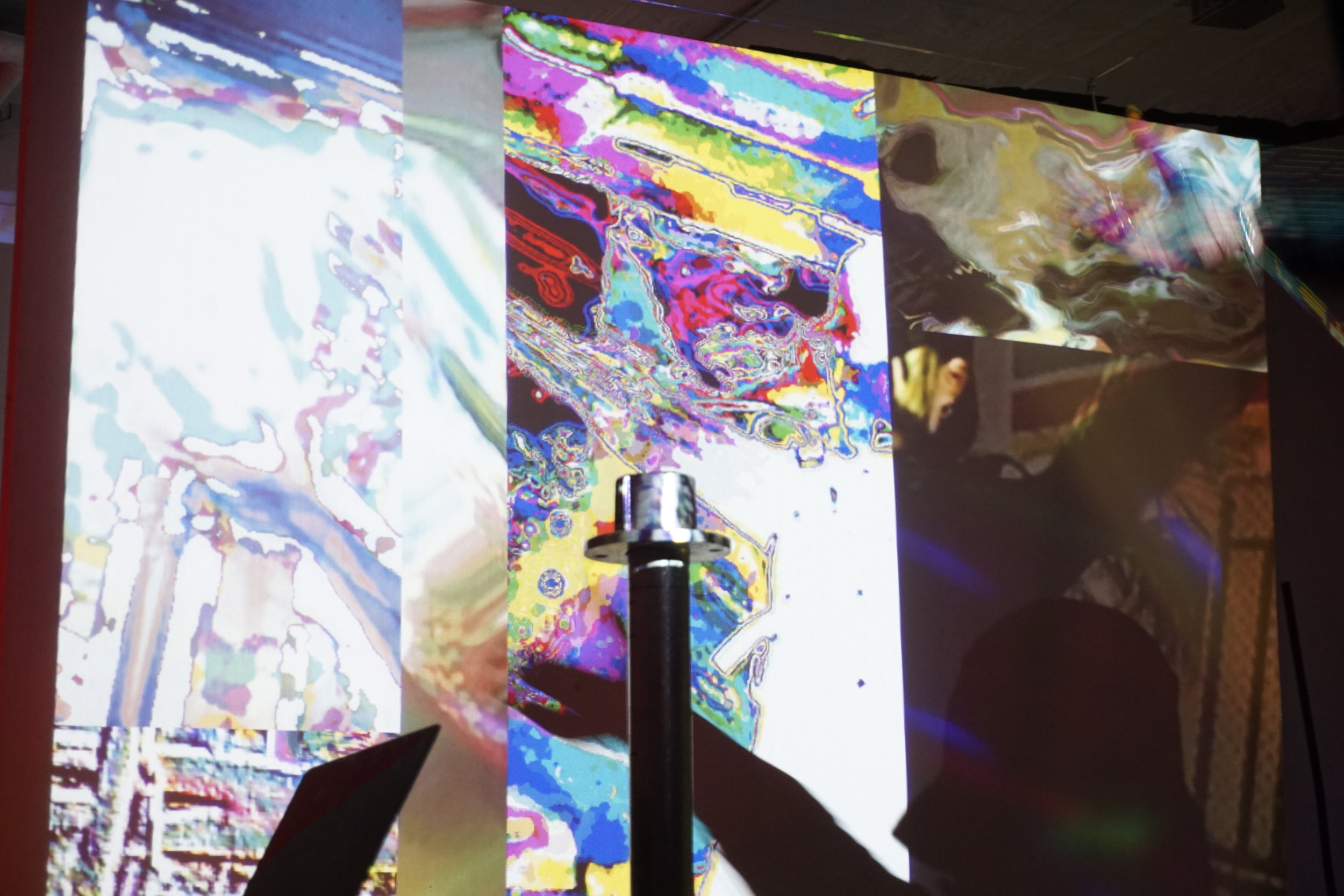

Pattern and Generative Sound Series

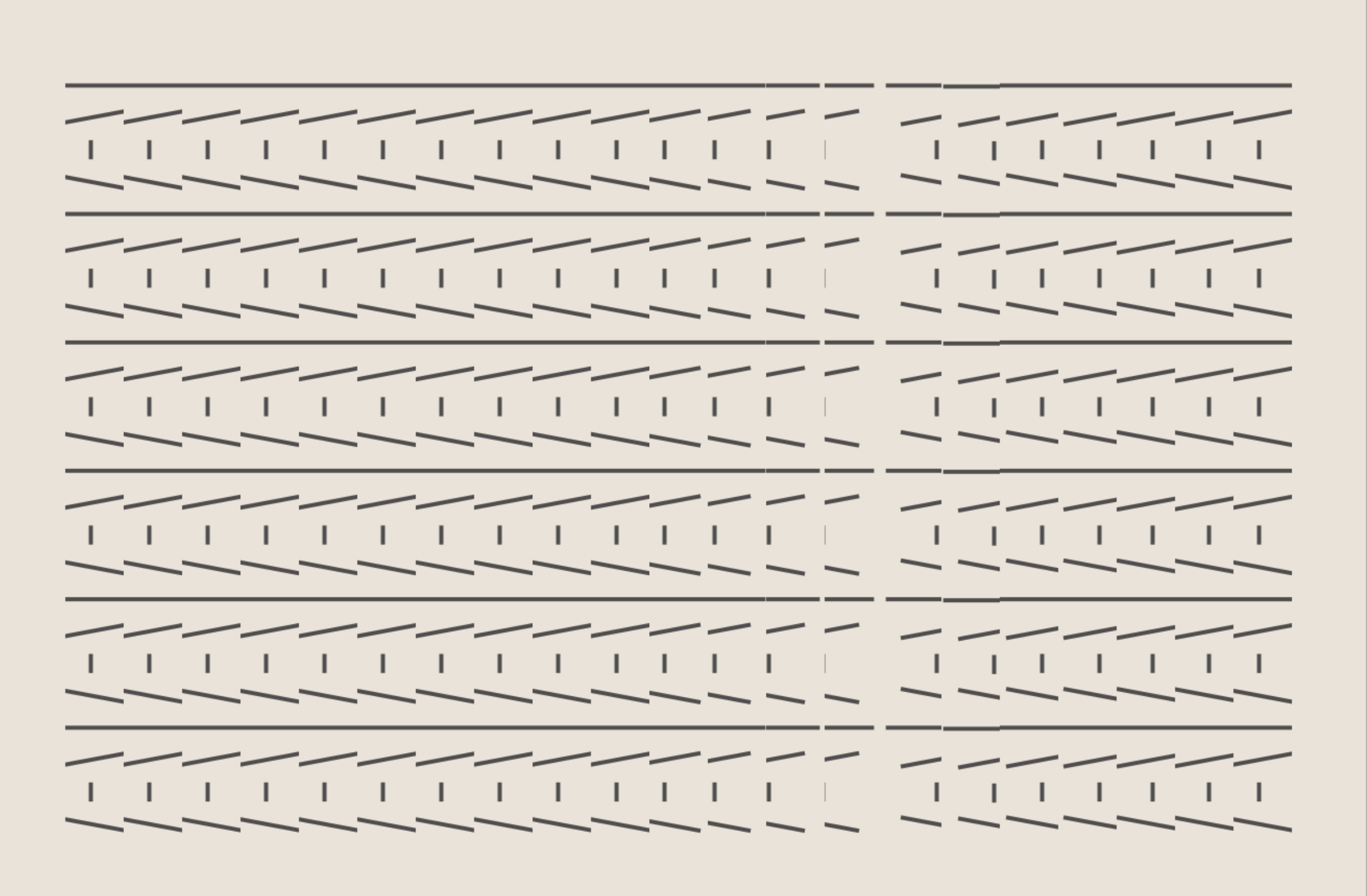

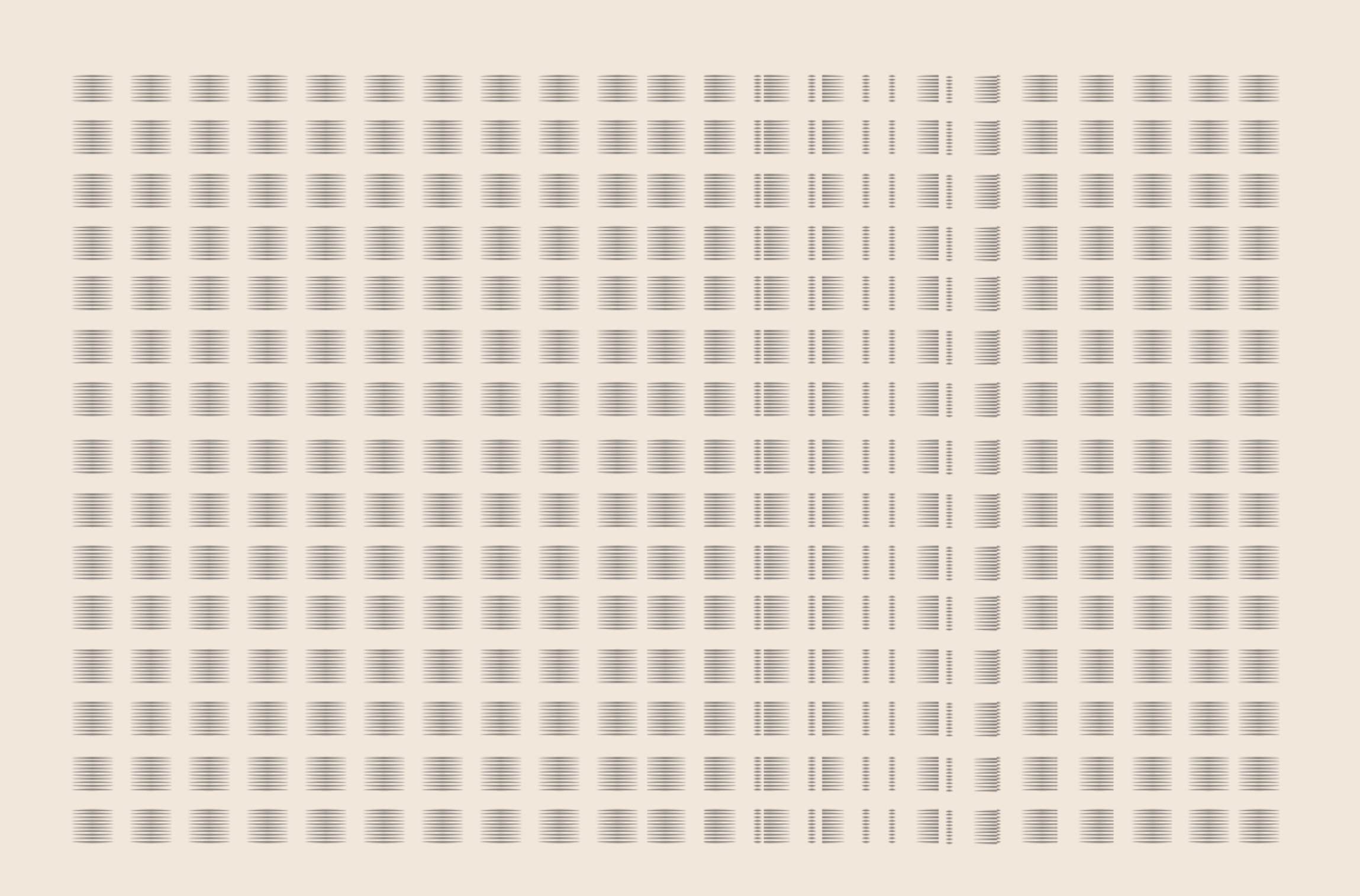

Using p5.js and Tone.js, I created a series of compositions that temporarily reconfigure in response to input from a Kinect motion sensor and generate soothing and energizing rhythmic sounds that resonate with the visual elements of the piece. People standing in front of the projected visuals and the sensor in effect create sonic compositions simply by moving, almost like a conductor in front of an orchestra, but with more leeway for passive interaction (if one simply walks by) or intentional interaction.

Composition 2: Allison's Use of Vim Editor

Mouseover version for testing on the computer.

In the editor using Kinect.

Composition 3: Isobel

In the p5.js editor.

Composition 4. "Brother"

In the p5.js editor.

For this syncopated piece I sampled sounds of tongue clicks and claps found on freesound.org. No Tone.js was used in this piece.

Composition 5. "Free Food (in the Zen Garden)"

For this composition I used brown noise from Tone.js.

In the p5.js editor.

After using mouseOver for prototyping, I switched over to Kinect and placed the composition on a big screen to have the user control the pattern with the movement of the right hand instead of the mouse.

Sound

I'm using Tone.js, written by Yotam Mann.

Visuals

p5.js and Adobe Illustrator.

Final Compositions

The next steps are to create more nuanced layers of interactivity that allow for more varied manipulation of the sound and the visuals. I envision the piece becoming a subtle sound orchestra that one can conduct with various hand movements at different locations on the screen.

Initial Mockups

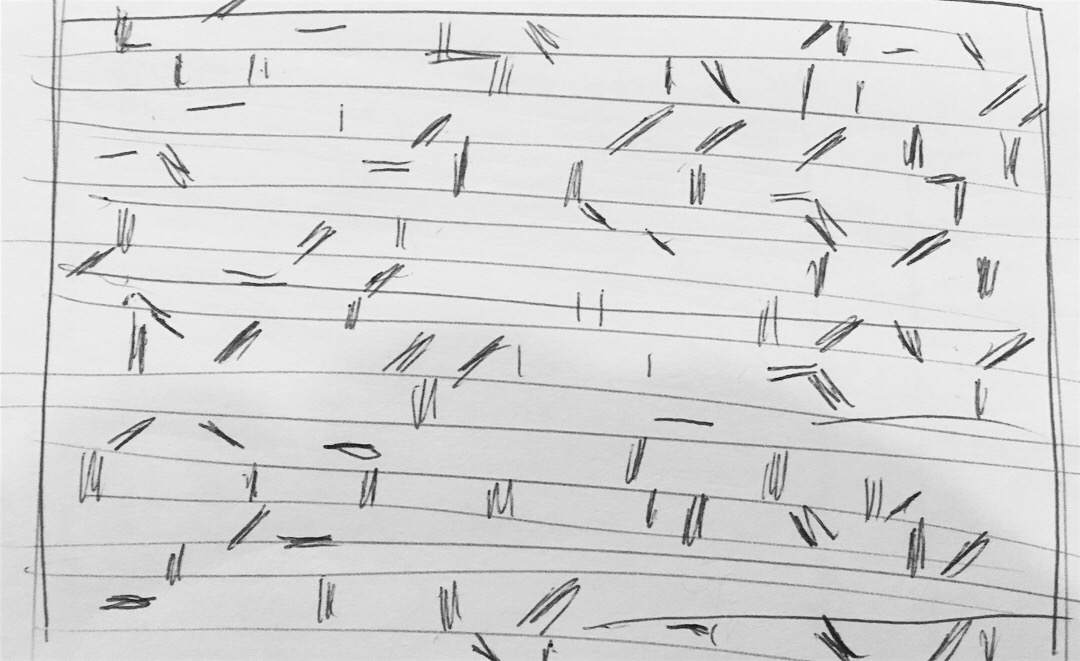

Sketches of ideas

Inspiration

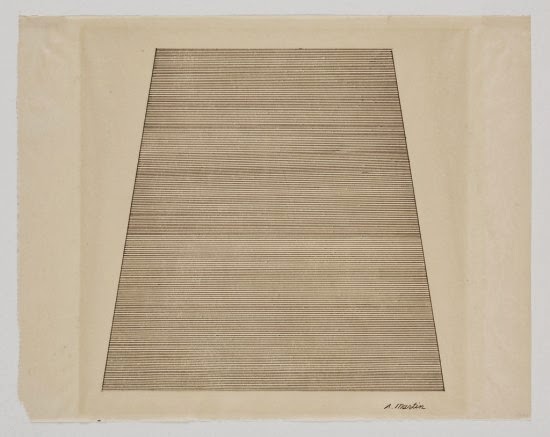

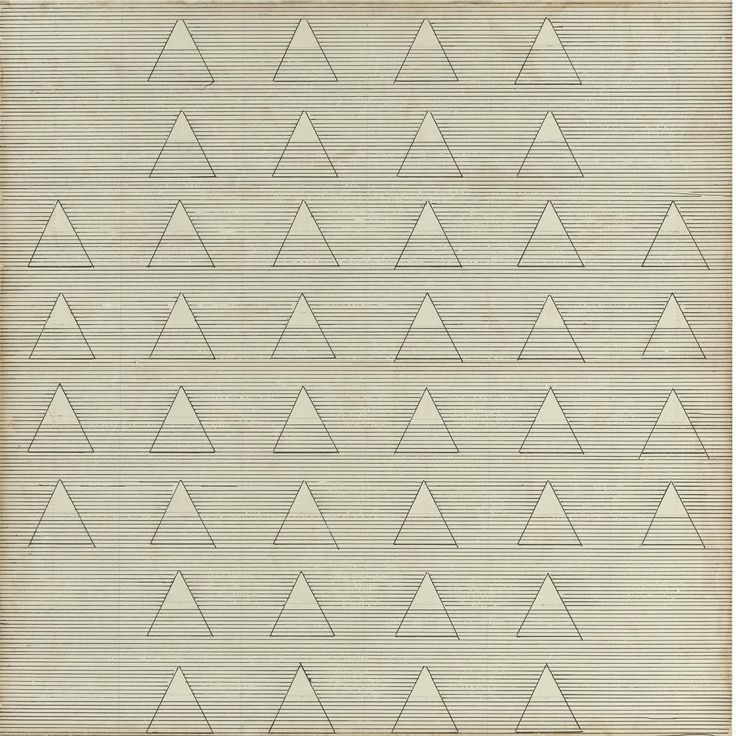

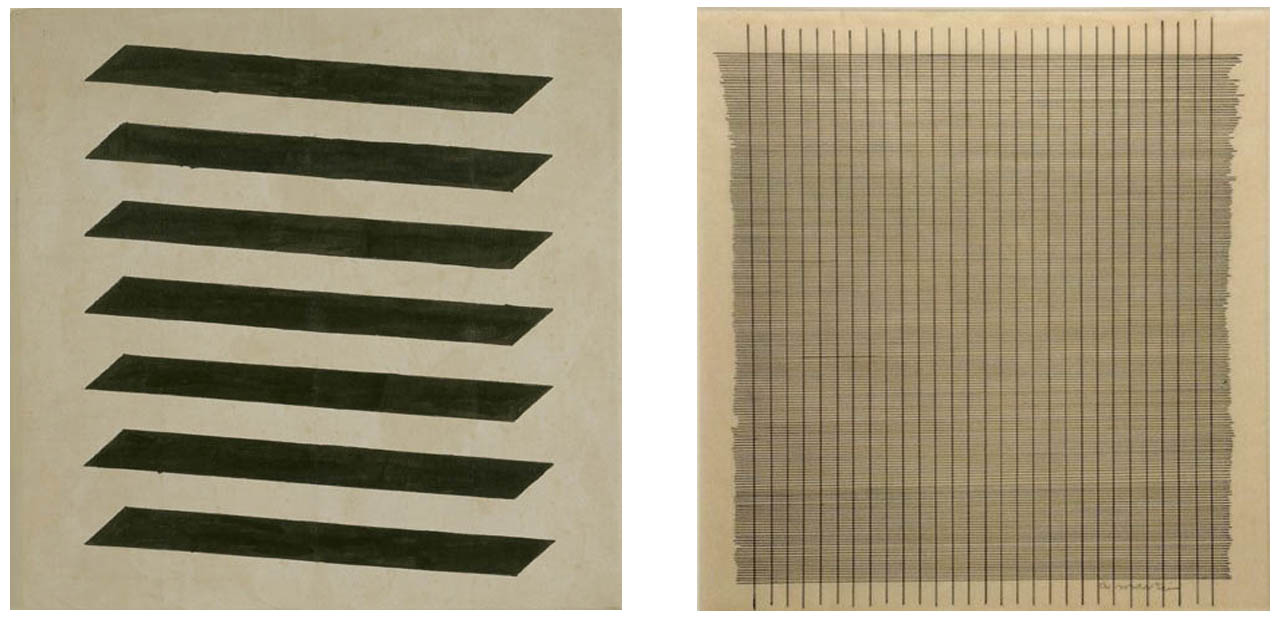

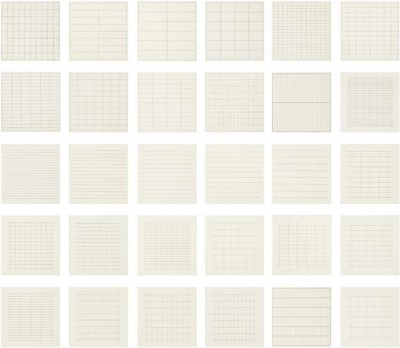

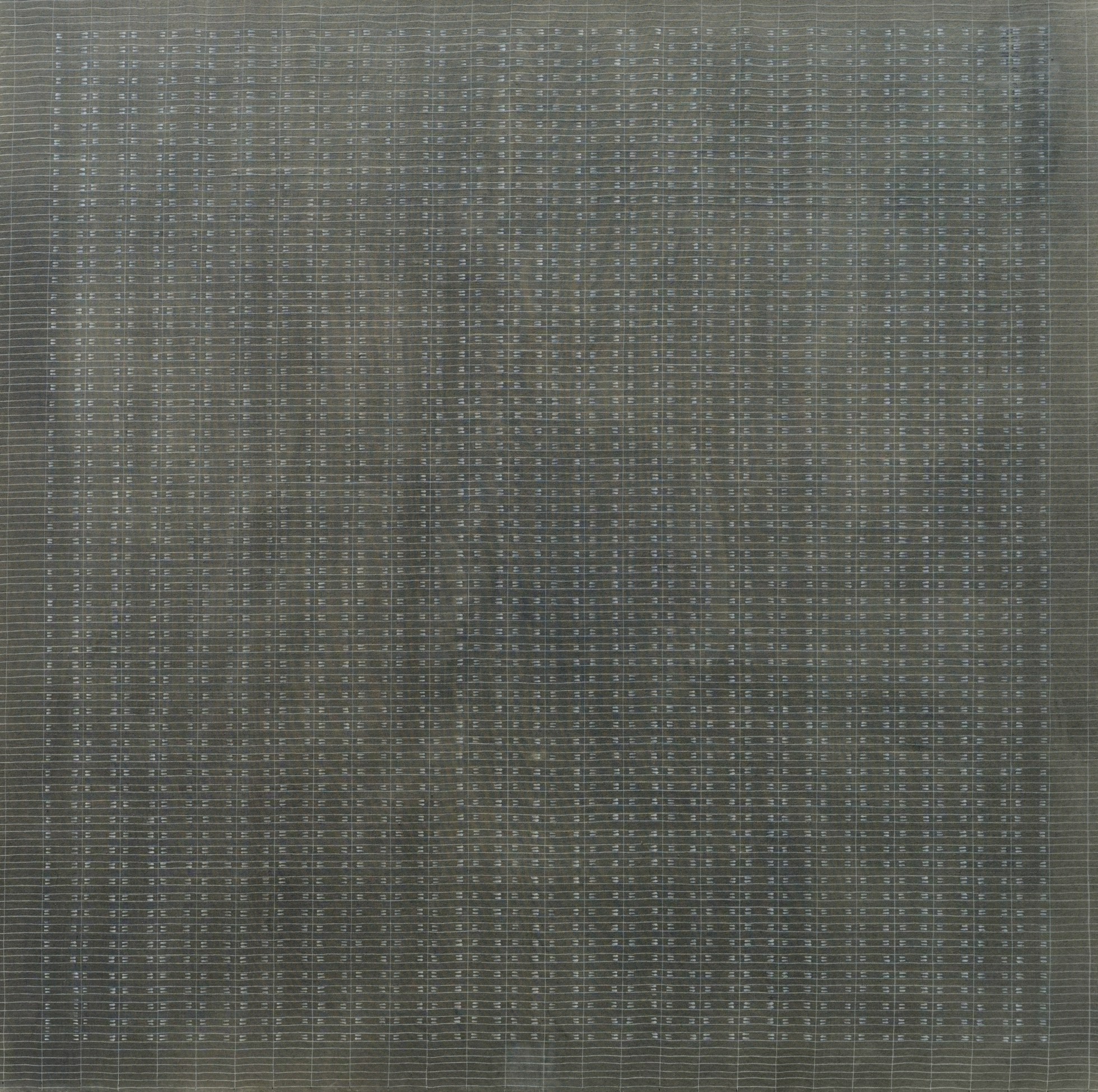

The simple grid-like designs I am creating are inspired by Agnes Martin's paintings.

Minimalist and sometimes intricate but always geometric and symmetrical, her work has been described as serene and meditative. She believed that abstract designs can elicit abstract emotions from the viewer, like happiness, love, and freedom.

Code

Composition 1: "Ode to Leon's OCD" using mouseover code.

Composition 2: "Allison's Use of Vim Editor" with Kinectron code, using Shawn Van Every and Lisa Jamhoury's Kinectron app and adapting code from one of Aaron Montoya Moraga's workshops.

Composition 2 with mouseover for testing on computer.

Composition 3: "Isobel" with mouseover.

Composition 4 "Brother" with mouseover.

Composition 5. "Free Food (in the Zen Garden)"

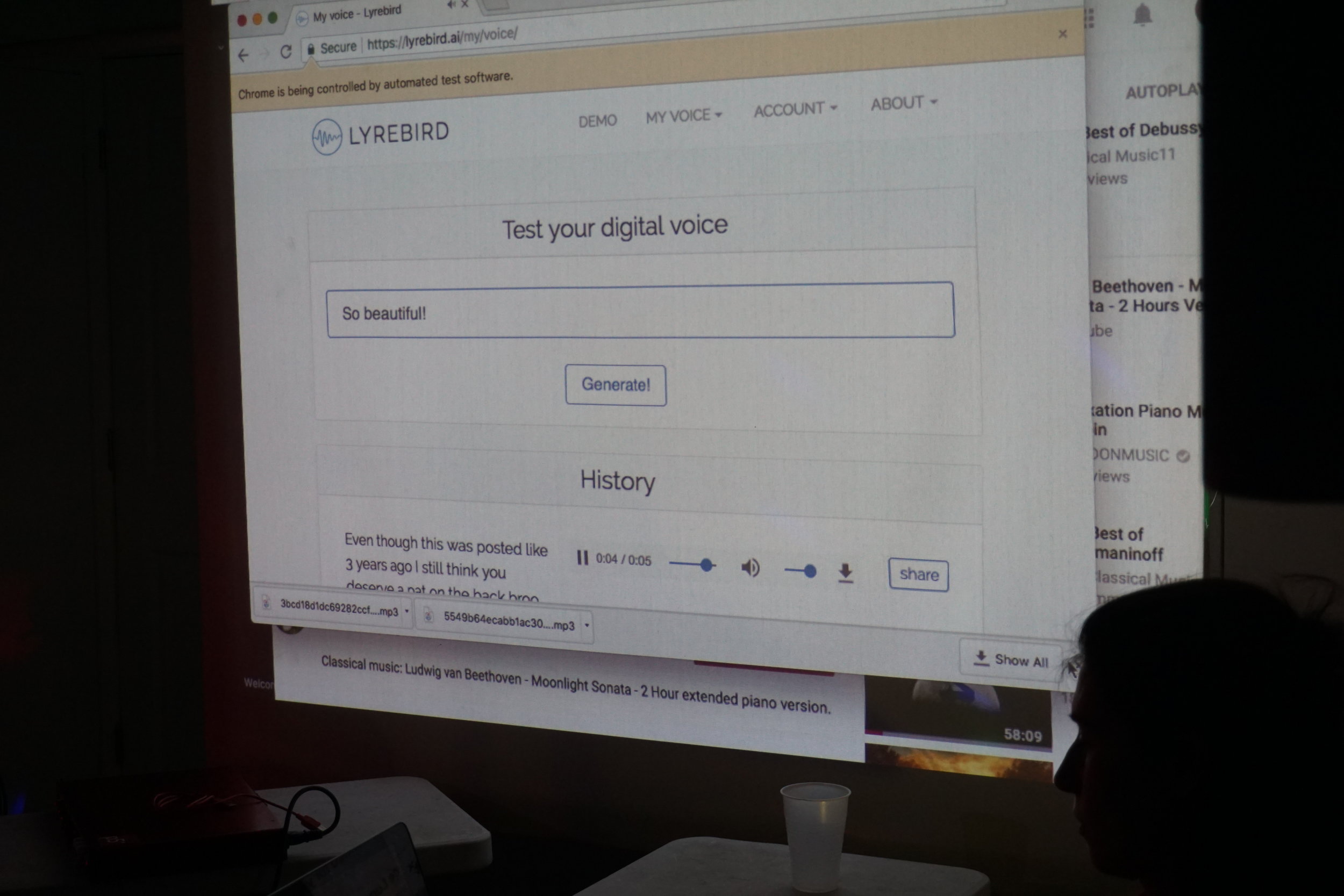

AI-generated voice that learned to sound like me reading every comment on 2-hours of Moonlight Sonata on Youtube + Reading Amazon reviews of Capital Vol. 1

It was a pleasure performing this piece, called AI-generated voice that learned to sound like me reading every comment on 2-hours of Moonlight Sonata on Youtube, at a venue called Babycastles in New York in 2017.


In this project I scraped YouTube comments from a 2-hour looped version of the first part of the Moonlight Sonata and placed them into a JSON file. I then used Selenium, through its Python library, to write a script that uploads the comments from the JSON file into Lyrebird, which reads each comment out loud in my own AI-generated voice. I had previously trained Lyrebird to sound like me, which adds to the unsettling nature of the project. I based my Selenium code on the code that Aaron Montoya-Moraga wrote for his automated emails project.

The code for this project can be found on GitHub.
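A minimal sketch of that JSON-to-browser step might look like the following. It is not the script used for the performance: the JSON shape (a flat list of comment strings), the page URL, the two CSS selectors, the pause length, and the choice of Firefox are all assumptions made for illustration, and every function name here is hypothetical.

```python
import json
import time


def load_comments(path):
    """Read a JSON file assumed to hold a flat list of comment strings."""
    with open(path, encoding="utf-8") as handle:
        comments = json.load(handle)
    # Drop non-string entries and empty comments; trim stray whitespace.
    return [c.strip() for c in comments if isinstance(c, str) and c.strip()]


def submit_comment(driver, comment, text_selector, button_selector):
    """Type one comment into a text box and press the button that speaks it.

    "css selector" is the value of Selenium's By.CSS_SELECTOR constant.
    Both selectors are placeholders for whatever the real page uses.
    """
    box = driver.find_element("css selector", text_selector)
    box.clear()
    box.send_keys(comment)
    driver.find_element("css selector", button_selector).click()


def read_all(url, path, text_selector, button_selector, pause=5.0):
    """Open the page once and feed it every comment in turn."""
    # Imported here so the helpers above can be used without a browser.
    from selenium import webdriver

    driver = webdriver.Firefox()
    try:
        driver.get(url)
        for comment in load_comments(path):
            submit_comment(driver, comment, text_selector, button_selector)
            # Crude fixed wait so one reading can finish before the next.
            time.sleep(pause)
    finally:
        driver.quit()
```

A fixed pause is the simplest way to keep readings from overlapping; for a live performance, triggering each comment by hand would give more control over timing.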

AI-generated voice that learned to sound like me reading every comment on 2-hours of Moonlight Sonata on Youtube explores themes of loneliness, banality, and anonymity on the web. The comments read out loud give voice to those who comment on this video. The resulting portrait ranges from banal to uncomfortable to extremely personal.

The piece is meant to be performed live.

___

CAPITAL VOL 1 Reading

This is a separate but similar project that also uses Selenium.

For Capital Volume 1, I had Lyrebird simply read its Amazon reviews one by one. I'm interested in exploring online communities and how they use products, art, or music as a jumping-off point for further discussion and as forums for expressing their feelings and views. People often say things online that they cannot say anywhere else, and it's an interesting way to examine how people view themselves and their environment.

The piece is also meant to be performed live.

Bold & Shy - Using VidPy and FFmpeg

This is a video that I created using VidPy and FFmpeg.

VidPy is a Python video editing library developed by Sam Lavigne.

Screens, Portals, Men, and Frodo

In this video piece I sampled video footage of Steve Jobs, Star Trek TNG, Lord of the Rings, and a nature video about summer that had poetry text. All of the videos were downloaded from YouTube using youtube-dl, fragmented in FFmpeg, and put together with jerky offsets using VidPy, a Python library developed by Sam Lavigne.
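The fragmenting step can be sketched as a small Python helper that builds the FFmpeg cut commands instead of typing each one by hand. This is an illustration only: the helper names are hypothetical, and the start times and durations are the Steve Jobs cuts from the FFmpeg notes further down.

```python
import shlex


def build_cut_command(source, start, duration, output):
    """Return the ffmpeg argument list for one stream-copied cut."""
    return [
        "ffmpeg",
        "-ss", start,         # seek to the start time before opening the input
        "-i", source,
        "-c:v", "copy",       # copy the video stream without re-encoding
        "-c:a", "copy",       # copy the audio stream without re-encoding
        "-t", str(duration),  # keep this many seconds
        output,
    ]


# (start time, length in seconds) for each fragment of the interview.
CUTS = [
    ("00:06:18", 6),
    ("00:07:43", 5),
    ("00:07:51", 5),
    ("00:11:08", 3),
]


def jobs_commands(source="jobs_640_480.mp4"):
    """Build one command per cut, numbering the output files from 1."""
    stem = source.rsplit(".", 1)[0]
    return [
        build_cut_command(source, start, length, f"{stem}_{index}.mp4")
        for index, (start, length) in enumerate(CUTS, start=1)
    ]


for command in jobs_commands():
    # Print the command; to run it, pass the list to subprocess.run(command, check=True).
    print(shlex.join(command))
```

Because the streams are copied rather than re-encoded, FFmpeg can only cut on keyframes, so fragments may begin slightly off the requested time.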

Themes explored: men as adventurers, technology as men's realm, legendary and real iconic figures and the grey area between, male as default gender in pop culture.

Code for Video 1, A Species Goodbye:

Python:

FFmpeg:

ffmpeg -ss 00:06:18 -i jobs_640_480.mp4 -c:v copy -c:a copy -t 6 jobs_640_480_1.mp4

ffmpeg -ss 00:07:43 -i jobs_640_480.mp4 -c:v copy -c:a copy -t 5 jobs_640_480_2.mp4

ffmpeg -ss 00:07:51 -i jobs_640_480.mp4 -c:v copy -c:a copy -t 5 jobs_640_480_3.mp4

ffmpeg -ss 00:11:08 -i jobs_640_480.mp4 -c:v copy -c:a copy -t 3 jobs_640_480_4.mp4

//cutting out a portion from jobs interview that shows the screen

ffmpeg -ss 00:00:06 -i sheliak_636_480.mp4 -c:v copy -c:a copy -t 5 sheliak_636_480_1.mp4

Got an error in the Python file while running singletrack:

Katyas-MBP:screens crashplanuser$ python screens.py

objc[40458]: Class SDLTranslatorResponder is implemented in both /Applications/Shotcut.app/Contents/MacOS/lib/libSDL2-2.0.0.dylib (0x10870af98) and /Applications/Shotcut.app/Contents/MacOS/lib/libSDL-1.2.0.dylib (0x108b6c2d8). One of the two will be used. Which one is undefined.

objc[40459]: Class SDLTranslatorResponder is implemented in both /Applications/Shotcut.app/Contents/MacOS/lib/libSDL2-2.0.0.dylib (0x110617f98) and /Applications/Shotcut.app/Contents/MacOS/lib/libSDL-1.2.0.dylib (0x110a892d8). One of the two will be used. Which one is undefined.

//get alien

ffmpeg -ss 00:00:29 -i sheliak_636_480.mp4 -c:v copy -c:a copy -t 1.5 sheliak_636_480_p.mp4

//get riker

ffmpeg -ss 00:00:38 -i holodeck.mp4 -c:v copy -c:a copy -t 10 holodeck.mp4_1.mp4

//get lotr chunk

ffmpeg -ss 00:00:34 -i lotr.mp4 -c:v copy -c:a copy -t 3 lotr_late.mp4

//couldn’t get this to make a sound